Design Methods

Chapter 2 — Principles, Failure Analysis, and Selection Logic

2.1 Executable Design Principles

Effective router security configuration is grounded in actionable, verifiable principles rather than abstract guidelines. Each principle below states the condition under which it applies, the security basis that justifies it, and an acceptance criterion that can be tested. Together, these principles form the foundation of the configuration baseline described throughout this guide.

| # | Principle | Condition | Security Basis | Acceptance Criterion |
|---|---|---|---|---|
| 1 | Deny-by-default on management plane | Any router with remote admin access | Least exposure | Port scan from non-ops networks shows all mgmt ports closed |
| 2 | Encrypt all management protocols | Always, no exceptions | Confidentiality & integrity | SSHv2/HTTPS/TLS 1.2+, SNMPv3 authPriv only; Telnet/HTTP disabled |
| 3 | Central AAA with RBAC | Multi-operator organizations | Least privilege / audit trail | Least-privileged role cannot modify routing policies; all privileged commands appear in SIEM |
| 4 | Local break-glass with strict constraints | AAA dependency exists | Recoverability | Single emergency account; restricted source IP; rotated per policy; every use generates SIEM alert |
| 5 | Control-plane adjacency whitelist | Dynamic routing protocols in use | Anti-hijack | Unauthorized peer cannot establish adjacency; rogue peer attempt logged |
| 6 | Routing authentication everywhere feasible | OSPF/IS-IS/BGP in use | Session integrity | Auth failures = 0 in steady state; neighbor test with wrong key fails |
| 7 | Route policy is mandatory | Any dynamic routing protocol | Prevent leak/hijack | Injected invalid prefix rejected; route policy unit tests pass |
| 8 | CoPP/CPP baseline | All routers | Availability under attack | Flood test: CPU <60% sustained; no adjacency resets |
| 9 | Data-plane anti-spoof | All edge interfaces | Stop spoofed traffic | Spoofed source packets dropped ≥99.9%; uRPF or ACL-based validation in place |
| 10 | Separation of duties in change management | All production changes | Operational risk reduction | Author ≠ approver; rollback plan documented in every change ticket |
| 11 | Config immutability mindset | All managed routers | Reproducibility | Templates + diff review; ad-hoc drift detected and alerted within 24 h |
| 12 | Log everything important, alert selectively | All routers with SIEM | Auditability | 100% log coverage; alert tuning reduces false positives; retention ≥180 days |
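Acceptance criteria like the one for principle 2 lend themselves to automated config audits. The following is a minimal sketch, assuming configurations are available as plain text and use IOS-style command syntax; the function name `audit_management_plane` and the pattern table are illustrative, and the patterns would need adapting to the platform actually in use.

```python
import re

# Assumption: IOS-style syntax. These patterns are illustrative, not exhaustive,
# and must be adapted to the command syntax of the platform being audited.
FORBIDDEN = {
    "Telnet permitted on vty": re.compile(r"^\s*transport input\b.*\b(telnet|all)\b"),
    "plain HTTP server enabled": re.compile(r"^\s*ip http server\b"),
    "SNMP community string (v1/v2c)": re.compile(r"^\s*snmp-server community\b"),
}


def audit_management_plane(config_text: str) -> list[str]:
    """Return one finding per offending line; an empty list means the check passed."""
    findings = []
    for lineno, line in enumerate(config_text.splitlines(), start=1):
        for label, pattern in FORBIDDEN.items():
            if pattern.search(line):
                findings.append(f"line {lineno}: {label}: {line.strip()}")
    return findings


if __name__ == "__main__":
    sample = "line vty 0 4\n transport input ssh\nno ip http server\n"
    print(audit_management_plane(sample) or "management plane check passed")
```

A check of this kind complements, but does not replace, the protocol scan: the audit shows what is configured, while the scan shows what is actually reachable.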

2.2 Failure Causes & Recommendations

The following table documents the most common failure mechanisms observed in router security deployments, their root causes, business impact, and actionable recommendations. Each entry includes a verification method to confirm the recommendation has been correctly implemented. Understanding these failure patterns is essential for both initial design and ongoing security reviews.

| Failure Mechanism | Typical Root Cause | Impact | Recommendation | Verification |
|---|---|---|---|---|
| Internet-exposed SSH | Convenience / no OOB available | Credential brute force, takeover | OOB/VPN + allowlist + MFA; block Internet mgmt entirely | External scan shows closed ports |
| Telnet/HTTP enabled | Legacy defaults not cleaned up | Credential theft in transit | Disable legacy; enforce SSH/HTTPS only | Config audit; protocol scan |
| SNMPv2c public strings | Poor baseline / copy-paste | Recon + config read | SNMPv3 authPriv only; restrict to ops subnet/VRF | SIEM check + SNMP scan |
| "permit any" route policy | Misunderstood BGP defaults | Route leak / hijack | Prefix/AS filters + RPKI; explicit permit only | Route policy unit tests |
| No max-prefix | Missing guardrail | Memory/CPU exhaustion | Set max-prefix + warning threshold + action | Neighbor test with simulated routes |
| No CoPP | Default config left unchanged | CPU DoS, adjacency resets | Implement CoPP class map with per-protocol rate limits | Controlled flood test |
| No uRPF/anti-spoof | Edge not hardened | Reflection/amplification abuse | uRPF strict/loose by topology; ACL-based where uRPF not feasible | Spoof packet test |
| No rollback/confirm | Manual changes without safety net | Extended outage | Commit-confirm / scheduled rollback; change drill quarterly | Rollback drill success |
| Unsynced time | No NTP configured or blocked | Forensics impossible; log correlation fails | NTP with auth; monitor offset; alert at 500 ms | Offset alarm test |
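Two of the guardrails above reduce to simple threshold logic, which can be sketched for use in a test harness. The example below is a sketch under stated assumptions: the class `MaxPrefixPolicy`, the function `ntp_offset_alarm`, and the 80% warning threshold are hypothetical and chosen for illustration. Only the 500 ms NTP alert level comes from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MaxPrefixPolicy:
    """Hypothetical model of a max-prefix guardrail: limit + warning + action."""
    limit: int               # hard limit on prefixes accepted from a neighbor
    warn_percent: int = 80   # assumed warning threshold, as a percentage of limit

    def evaluate(self, received: int) -> str:
        """Classify a received-prefix count as 'ok', 'warn', or 'action'."""
        if received > self.limit:
            return "action"  # e.g. tear down or restart the session, per policy
        if received * 100 >= self.limit * self.warn_percent:
            return "warn"    # log and alert, session stays up
        return "ok"


def ntp_offset_alarm(offset_ms: float, threshold_ms: float = 500.0) -> bool:
    """Return True when the absolute clock offset reaches the alert threshold."""
    return abs(offset_ms) >= threshold_ms


if __name__ == "__main__":
    policy = MaxPrefixPolicy(limit=1000)
    for count in (500, 850, 1200):
        print(count, policy.evaluate(count))
    print("NTP alarm:", ntp_offset_alarm(-620.0))
```

In a neighbor test with simulated routes, the counts fed to `evaluate` would come from the test peer, and the expected state for each count becomes the pass/fail condition.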

2.3 Core Design & Selection Logic

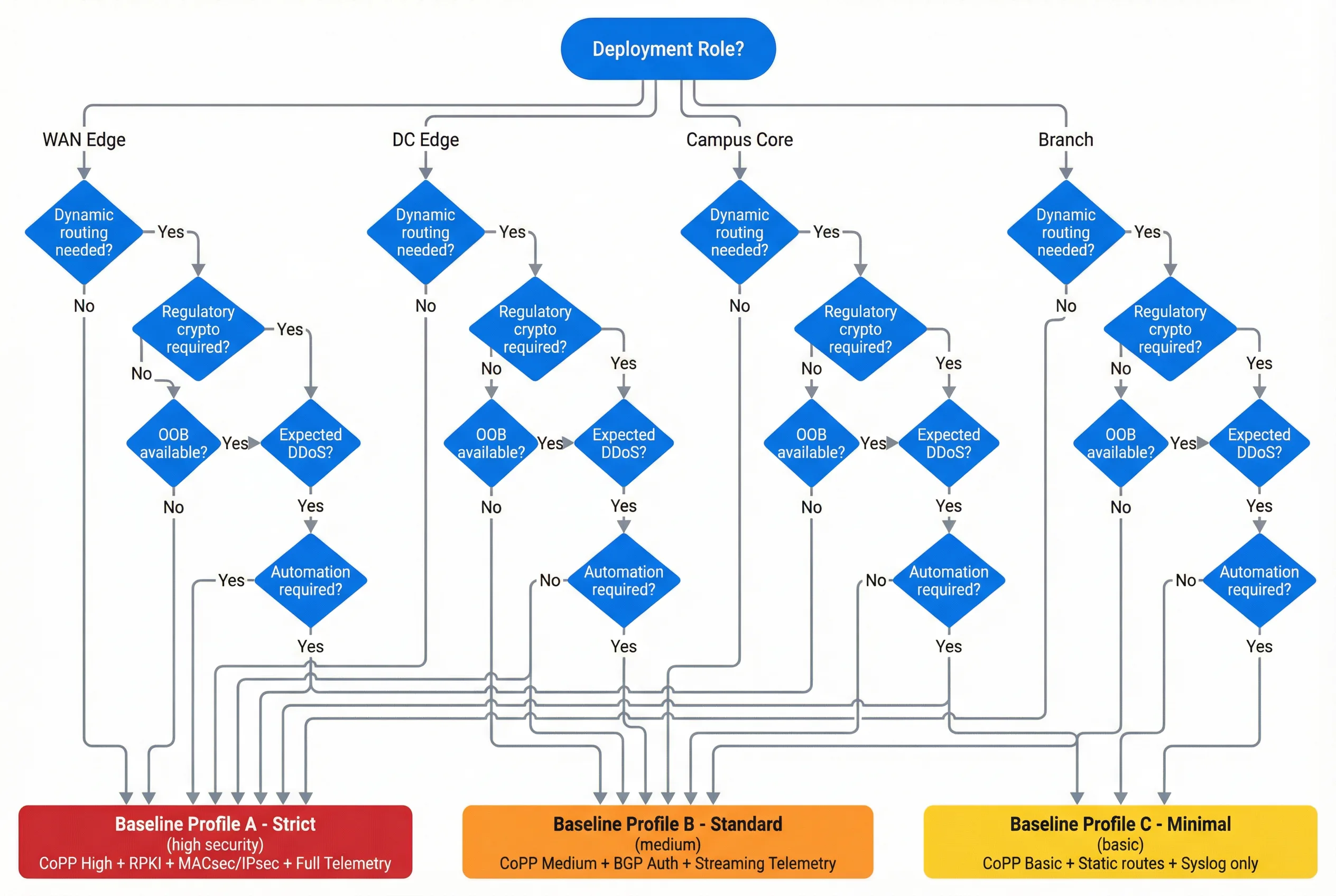

The design selection process follows a structured decision workflow: it begins by identifying the deployment role and threat exposure, then progressively narrows to a specific baseline profile and feature set. The decision tree in the figure above illustrates this process, mapping deployment roles and environmental conditions to one of three baseline profiles: Profile A (Strict), Profile B (Standard), or Profile C (Minimal).

Decision Steps

- Identify role and threat exposure — WAN Edge, DC Edge, Campus Core, or Branch; assess Internet exposure, regulatory requirements, and operational maturity.

- Decide management approach — OOB (preferred for high-exposure sites) vs. in-band management VRF (acceptable for lower-exposure sites with compensating controls).

- Choose AAA/RBAC model — authentication strength (MFA, certificates), role granularity, and break-glass procedure.

- Choose routing protocols and protection set — authentication, peer ACL, route policy, RPKI, max-prefix thresholds.

- Define data-plane boundary policy — ACL/uRPF/VRF segmentation appropriate to the site's topology and traffic patterns.

- Define recoverability — backup frequency, rollback method, golden image, spare strategy, and RTO target.

- Define monitoring — logs, flows, CPU/punt counters, adjacency state, and alert thresholds.

- Convert to configuration templates + acceptance tests — template-driven deployment with peer review and staged commit.
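The first two decision steps can be sketched as a small selection function. This is illustrative only: the mapping rules below are placeholders and are not taken from the decision tree in the figure, and the names `Role`, `Site`, `select_profile`, and `select_management` are hypothetical. The only rule drawn from the text is that OOB management is preferred for high-exposure sites.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    WAN_EDGE = "WAN Edge"
    DC_EDGE = "DC Edge"
    CAMPUS_CORE = "Campus Core"
    BRANCH = "Branch"


@dataclass(frozen=True)
class Site:
    role: Role
    internet_exposed: bool
    regulated: bool


def select_profile(site: Site) -> str:
    """Map a site to baseline profile 'A', 'B', or 'C'.

    Placeholder rules for illustration; substitute the organization's
    actual decision tree before relying on the result.
    """
    if site.internet_exposed or site.regulated:
        return "A"  # Strict
    if site.role in (Role.WAN_EDGE, Role.DC_EDGE, Role.CAMPUS_CORE):
        return "B"  # Standard
    return "C"      # Minimal


def select_management(site: Site) -> str:
    """OOB for high-exposure sites; in-band management VRF otherwise."""
    return "OOB" if site.internet_exposed else "in-band VRF"


if __name__ == "__main__":
    site = Site(Role.WAN_EDGE, internet_exposed=True, regulated=False)
    print(select_profile(site), select_management(site))
```

Encoding the selection logic this way makes the choice of profile reviewable and repeatable, which supports the final step of converting decisions into templates and acceptance tests.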

2.4 Key Design Dimensions

Router security design must balance multiple competing dimensions. The table below summarizes the eight key dimensions that should be evaluated during the design phase, along with the primary considerations and trade-offs for each. No single dimension can be optimized in isolation — the design must find the appropriate balance for the specific deployment context.

| Dimension | Primary Considerations | Key Trade-offs | Design Guidance |
|---|---|---|---|
| Performance / Experience | Forwarding throughput, latency, convergence time, crypto overhead | Security controls (ACL/uRPF/IPsec) add processing overhead | Verify hardware can sustain design throughput with all security features enabled |
| Stability / Reliability | Redundancy, HA, control-plane headroom, MTBF | More security controls = more configuration complexity = more failure modes | Test all failure scenarios; maintain ≥40% CPU headroom in steady state |
| Maintainability | Standardized templates, modular policies, clear comments, safe upgrade path | Highly customized configs are hard to maintain and audit | Template-driven; minimize one-off exceptions; document every deviation |
| Compatibility / Extension | Interface types, routing features, telemetry, automation APIs | Proprietary features create vendor lock-in | Prefer standard protocols; verify feature parity before upgrades |
| LCC / TCO | Licenses, power, spares, maintenance labor, incident cost | Higher upfront security investment reduces incident cost | Profile A costs more upfront but reduces incident cost; Profile B is balanced |
| Energy / Environment | Power draw, cooling, acoustic, heat in closets | Security appliances add power and heat load | Verify power budget and cooling capacity before deployment |
| Compliance | Crypto standards, logging, access controls, retention | Regulatory requirements may mandate specific controls | Map compliance requirements to configuration controls; document evidence |
| Supply Chain | Firmware provenance, SBOM availability, patch cadence | Untrusted firmware is a persistent compromise risk | Verify firmware source; enable secure boot where supported; track CVEs |
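The CPU headroom guidance in the Stability / Reliability row can be checked against collected utilization samples. The sketch below assumes that headroom is measured against the peak sample in the observation window, which is a conservative interpretation rather than something the guide specifies; the function name `cpu_headroom_ok` is hypothetical.

```python
from typing import Sequence


def cpu_headroom_ok(cpu_percent_samples: Sequence[float],
                    min_headroom: float = 40.0) -> bool:
    """Return True if steady-state CPU headroom meets the minimum.

    Assumption: headroom = 100 - peak utilization over the window.
    Using the mean or a percentile instead would be a less strict reading.
    """
    if not cpu_percent_samples:
        raise ValueError("at least one CPU sample is required")
    peak = max(cpu_percent_samples)
    return (100.0 - peak) >= min_headroom


if __name__ == "__main__":
    steady_state = [35.0, 42.0, 58.0]
    print("headroom ok:", cpu_headroom_ok(steady_state))
```

Note that a 40% headroom floor corresponds to a 60% utilization ceiling, which is close to the flood-test criterion in principle 8 (CPU <60% sustained).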